Xu Hao uses chain of thought and generated knowledge prompting with ChatGPT when writing self-testing code

My account of an internal chat with Xu Hao, in which he shows how he drives ChatGPT to produce useful self-tested code. His initial prompt primes the LLM with an implementation strategy (chain of thought prompting). His prompt also asks for an implementation plan rather than code (generated knowledge prompting). Once he has the plan, he uses it to refine the implementation and generate useful sections of code.

Recently I watched a fascinating call on Zoom. Xu Hao, Thoughtworks’s Head of Technology in China, explained his explorations in using ChatGPT to help build Self Testing Code. He went through the kind of interaction that works for him.

He starts with a prompt that sets the context for the application and how he wants the code to be structured.

The current system is an online whiteboard system. Tech stack: typescript, react, redux, konvajs and react-konva. And vitest, react testing library for model, view model and related hooks, cypress component tests for view.

All codes should be written in the tech stack mentioned above. Requirements should be implemented as react components in the MVVM architecture pattern.

There are 2 types of view model in the system.

1. Shared view model. View model that represents states shared among local and remote users.
2. Local view model. View model that represents states only applicable to local user

Here are the common implementation strategy:

1. Shared view model is implemented as Redux store slice. Tested in vitest.
2. Local view model is implemented as React component props or states(by useState hook), unless for global local view model, which is also implemented as Redux store slice. Tested in vitest.
3. Hooks are used as the major view helpers to retrieve data from shared view model. For most the case, it will use ‘createSelector’ and ‘useSelector’ for memorization. Tested in vitest and react testing library.
4. Don’t dispatch action directly to change the states of shared view model, use an encapsulated view model interface instead. In the interface, each redux action is mapped to a method. Tested in vitest.
5. View is consist of konva shapes, and implemented as react component via react-konva. Tested in cypress component tests

Here are certain patterns should be followed when implement and test the component

1. When write test, use `describe` instead of `test`
2. Data-driven tests are preferred.
3. When test the view component, fake view model via the view model interface

Awareness Layer

Requirement:

Display other users’ awareness info(cursor, name and online information) on the whiteboard.

AC1: Don’t display local user
AC2: When remote user changes cursor location, display the change in animation.

Provide an overall solution following the guidance mentioned above. Hint, keep all awareness information in a Konva layer, and an awareness info component to render cursor, and name. Don’t generate code. Describe the solution, and breaking the solution down as a task list based on the guidance mentioned above. And we will refer this task list as our master plan.

There’s a lot going on with this prompt, so he highlighted a few points.

He's using a generic application example here: one thing to be wary of when interacting with ChatGPT and the like is that we should never put anything that may be confidential into the prompt, as that would be a security risk. Business rules and any code from a real project must not enter the interaction with ChatGPT.

Most of the prompt sets out the design guidelines that he wants ChatGPT’s generated code to follow. He refined this by putting prompts into ChatGPT, looking at the result, and crafting the prompt to make it generate in the style he wanted. Once he has the context working, he can paste it into every session he has with ChatGPT, and share it with the rest of the team.

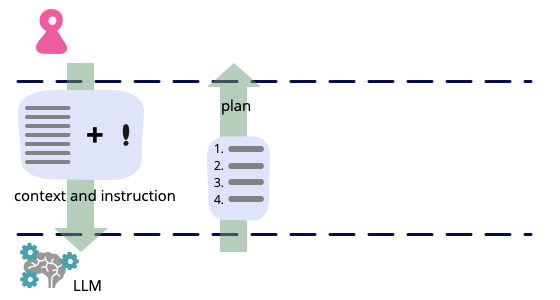

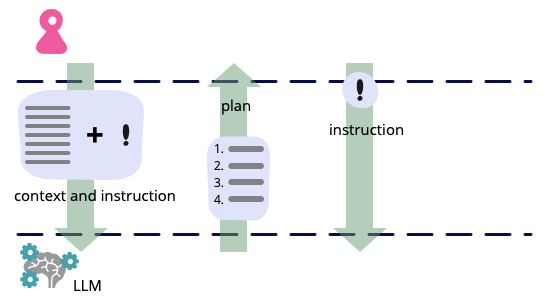

Xu Hao begins his session by prompting the ChatGPT LLM with context as well as the instruction to generate a plan

Even though the final goal of this session is to generate code and its tests, at this stage he specifically said Don’t generate code. At this point he wants ChatGPT to outline its approach before we look at any code generation. This is a prompt technique called Generated Knowledge.

A common way of interacting with an LLM is to give it a problem and ask it to generate some final product, whether that be prose or (in this case) code. With Generated Knowledge we split our interaction into separate steps: first we ask the LLM to generate some useful information about the problem, and then we feed that information back into the LLM to generate the final product.
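To make the two steps concrete, here is a minimal sketch in TypeScript. It is not Xu Hao's tooling: the `Complete` type stands in for whatever chat client is available, and `planPrompt`, `codePrompt`, and `generatedKnowledge` are hypothetical names introduced only for illustration. In an interactive chat session the plan stays in the conversation automatically; the sketch just makes the feed-back step explicit.

```typescript
// Hypothetical abstraction over any chat LLM; not a real client API.
type Complete = (prompt: string) => Promise<string>;

// Step 1: ask for knowledge about the problem (a plan), explicitly not code.
function planPrompt(context: string, requirement: string): string {
  return [
    context,
    `Requirement: ${requirement}`,
    "Don't generate code. Describe the solution as a numbered task list.",
  ].join("\n\n");
}

// Step 2: feed the generated plan back in and ask for the final product.
function codePrompt(context: string, plan: string, task: number): string {
  return [
    context,
    `Master plan:\n${plan}`,
    `Provide tests and implementation for task ${task}. Test first.`,
  ].join("\n\n");
}

// Runs both steps in order: the output of the first call becomes
// part of the input to the second.
async function generatedKnowledge(
  complete: Complete,
  context: string,
  requirement: string,
  task: number,
): Promise<string> {
  const plan = await complete(planPrompt(context, requirement));
  return complete(codePrompt(context, plan, task));
}
```

Keeping the prompt builders as pure functions means the wording of each step can be reviewed and shared with a team independently of any particular LLM client.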

The most interesting part of the prompt is the “implementation strategy” section, which combines two styles of prompt:

- Instruction prompting provides instructions for the LLM to follow
- Chain of Thought prompting encourages the LLM to explain its reasoning.

In the Implementation Strategy section, Xu Hao described the desired architecture pattern as an expected “Chain of Thought instructions” for ChatGPT to follow. Then he instructed ChatGPT to build a task list (the generated knowledge) based on this chain of thought.

Solution:

To implement the awareness layer feature, we can follow the guidelines mentioned in the problem statement and use the existing tech stack.

Task List:

1. Create a new component named “AwarenessInfo” to render cursor and name for remote users.
2. Create a new Konva layer to hold all the “AwarenessInfo” components.
3. Add the Konva layer to the main whiteboard component.
4. Create a new Redux slice to store the awareness information for remote users.
5. Create an encapsulated view model interface for the awareness Redux slice.
6. Implement the “AwarenessInfo” component to retrieve and display the awareness information for each remote user from the view model.
7. Implement a method in the encapsulated view model interface to update the awareness information for each remote user.
8. When a remote user changes the cursor location, dispatch the update method in the encapsulated view model interface to update the corresponding awareness information.
9. In the “AwarenessInfo” component, use the new location to animate the cursor change.
10. Write tests for the new “AwarenessInfo” component, including data-driven tests to ensure it properly displays the awareness information for all remote users.
11. Write tests for the encapsulated view model interface to ensure it properly updates the awareness information for remote users.
12. Write end-to-end Cypress tests to ensure the awareness layer is properly displayed and updated when remote users change their cursor location.

By following these tasks, we can implement the awareness layer feature in the online whiteboard system.

The response from ChatGPT is non-deterministic, so this result is just one run. If you (or I) put this prompt in again, we’ll get a different response. The key element of the response is an ordered task list, with numbers for each step. We can use these numbers to refer to these tasks for the rest of the session.
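Because the tasks are numbered, a follow-up prompt only needs the number. A minimal sketch of that idea, assuming the response lists tasks as lines of the form `1. ...`; `parseTaskList` and `followUpPrompt` are hypothetical helpers, not part of the session described here.

```typescript
type Task = { id: number; text: string };

// Picks out lines that look like "3. Do something" and ignores everything else.
function parseTaskList(response: string): Task[] {
  const tasks: Task[] = [];
  for (const line of response.split("\n")) {
    const match = /^\s*(\d+)\.\s+(.+)$/.exec(line);
    if (match) {
      tasks.push({ id: Number(match[1]), text: match[2].trim() });
    }
  }
  return tasks;
}

// Builds a follow-up prompt that refers to a task by its number.
function followUpPrompt(tasks: Task[], id: number): string {
  const task = tasks.filter((t) => t.id === id)[0];
  if (!task) {
    throw new Error(`No task ${id} in the master plan`);
  }
  return `Provide tests and implementation for task ${id} of the master plan (${task.text}). Test first.`;
}
```

Since each run produces a different plan, the numbers are only meaningful within the session that produced them.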

The LLM responds with a plan.

Xu Hao can now look at the master plan and see if it makes sense. If there are problems with it, he can ask ChatGPT to rewrite some of the tasks or the entire master plan by providing more information.

For example, the current master plan was not grouped by different types of components. One possible prompt might be:

Rewrite the master plan, and group tasks as model, view model and view.

This could be useful to verify the alignment between the architecture vision and master plan.

These tweaks could be added to the initial prompt to get an overall better response. For this particular prompt, the following instruction was not in the initial prompt, but was added in a later iteration:

4. Don’t dispatch action directly to change the states of shared view model, use an encapsulated view model interface instead. In the interface, each redux action is mapped to a method. Tested in vitest.
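As a rough illustration of what this instruction asks for, the sketch below maps each action to one method behind an interface. It deliberately avoids importing Redux so that it stands alone: the action shapes, `createAwarenessViewModel`, and `createFakeAwarenessViewModel` are assumptions made for this example, not code from the session.

```typescript
// Illustrative types only; a real project would use the slice's own action creators.
type CursorPosition = { x: number; y: number };

type AwarenessAction =
  | { type: "awareness/updateCursor"; payload: { user: string; cursorPosition: CursorPosition } }
  | { type: "awareness/setOnline"; payload: { user: string; online: boolean } };

type Dispatch = (action: AwarenessAction) => void;

// The view talks to this interface and never calls dispatch itself.
interface AwarenessViewModel {
  updateCursorPosition(user: string, cursorPosition: CursorPosition): void;
  setOnline(user: string, online: boolean): void;
}

// Production implementation: each action is mapped to exactly one method.
function createAwarenessViewModel(dispatch: Dispatch): AwarenessViewModel {
  return {
    updateCursorPosition: (user, cursorPosition) =>
      dispatch({ type: "awareness/updateCursor", payload: { user, cursorPosition } }),
    setOnline: (user, online) =>
      dispatch({ type: "awareness/setOnline", payload: { user, online } }),
  };
}

// A fake for view tests: same interface, records calls instead of dispatching.
function createFakeAwarenessViewModel(): AwarenessViewModel & { calls: string[] } {
  const calls: string[] = [];
  return {
    calls,
    updateCursorPosition: (user) => { calls.push(`updateCursorPosition:${user}`); },
    setOnline: (user) => { calls.push(`setOnline:${user}`); },
  };
}
```

The interface is what makes the third testing pattern in the prompt possible: a view component that depends only on `AwarenessViewModel` can be handed the fake without a store.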

Once the master plan looks reasonable, Xu Hao asks to regenerate it with names for the program elements.

Rewrite the master plan, this time with detailed component names, methods, and props

Sure, here's a revised master plan with detailed component names, methods, and props:

Task List:

1. Create a new component named “AwarenessInfo” to render cursor and name for remote users. This component will receive props such as user name, cursor position, and online status.
2. Create a new Konva layer named “AwarenessLayer” to hold all the “AwarenessInfo” components.
3. Add the “AwarenessLayer” to the main whiteboard component.
4. Create a new Redux slice named “awareness” to store the awareness information for remote users.
5. Create an encapsulated view model interface named “AwarenessViewModel” for the “awareness” Redux slice. This interface will contain a method named “updateUserAwarenessInfo” to update the awareness information for each remote user.
6. Implement the “AwarenessInfo” component to retrieve and display the awareness information for each remote user from the view model. The component will use the “useSelector” hook to retrieve the relevant information.
7. Implement the “updateUserAwarenessInfo” method in the “AwarenessViewModel” interface to dispatch a Redux action to update the awareness information for each remote user. This method will be called by the “MainWhiteboard” component.
8. When a remote user changes the cursor location, dispatch the “updateUserAwarenessInfo” method in the “AwarenessViewModel” interface to update the corresponding awareness information.
9. In the “AwarenessInfo” component, use the new location to animate the cursor change. This can be accomplished using the Konva “Tween” animation.
10. Write tests for the new “AwarenessInfo” component, including data-driven tests to ensure it properly displays the awareness information for all remote users. The tests will be written using the React Testing Library.
11. Write tests for the encapsulated view model interface to ensure it properly updates the awareness information for remote users. These tests will use the Vitest framework.
12. Write end-to-end Cypress tests to ensure the awareness layer is properly displayed and updated when remote users change their cursor location. The tests will simulate user interactions with the whiteboard and verify the state of the “AwarenessLayer” and “AwarenessInfo” components.
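The plan calls for data-driven tests. One way to read that, sketched here without any test framework so it runs on its own, is a table of cases fed through a single check. The `selectRemoteUsers` function is a hypothetical selector for AC1 (don't display the local user), and the state shape is an assumption made for this example; in Vitest the same table could be passed to `it.each`.

```typescript
type UserAwareness = {
  name: string;
  cursorPosition: { x: number; y: number };
  online: boolean;
};
type AwarenessState = { users: Record<string, UserAwareness> };

// AC1 from the prompt: the local user is not displayed.
function selectRemoteUsers(state: AwarenessState, localUserId: string): UserAwareness[] {
  return Object.keys(state.users)
    .filter((id) => id !== localUserId)
    .map((id) => state.users[id]);
}

const state: AwarenessState = {
  users: {
    user1: { name: "User 1", cursorPosition: { x: 0, y: 0 }, online: true },
    user2: { name: "User 2", cursorPosition: { x: 100, y: 100 }, online: true },
  },
};

// Each row is one test case: the input and the names expected on screen.
const cases: Array<{ localUserId: string; expected: string[] }> = [
  { localUserId: "user1", expected: ["User 2"] },
  { localUserId: "user2", expected: ["User 1"] },
  { localUserId: "nobody", expected: ["User 1", "User 2"] },
];

for (const testCase of cases) {
  const names = selectRemoteUsers(state, testCase.localUserId).map((u) => u.name);
  if (JSON.stringify(names) !== JSON.stringify(testCase.expected)) {
    throw new Error(`local user ${testCase.localUserId}: got ${names.join(", ")}`);
  }
}
```

Adding a new scenario then means adding a row to `cases` rather than writing another test body.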

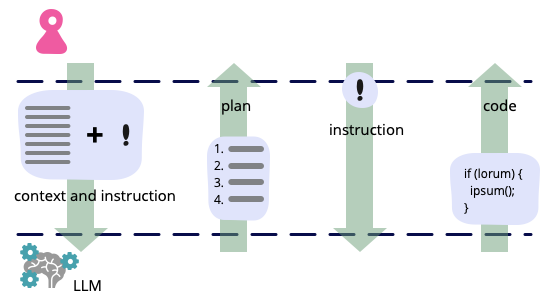

At this point, Xu Hao is ready to ask ChatGPT to provide code for one of the steps

provide me example tests and implementation for the awareness slice and AwarenessViewModel. Test first.

Sure, let's start with writing tests for the awareness slice and AwarenessViewModel:

Test for Awareness Slice:

    describe('awareness slice', () => {
      let store: MockStore;

      beforeEach(() => {
        store = configureMockStore()(initialState);
      });

      it('should set the cursor location', () => {
        store.dispatch(setCursorLocation({ x: 100, y: 200 }));
        const state = store.getState().awareness;
        expect(state.cursorLocation).toEqual({ x: 100, y: 200 });
      });

      it('should set the name', () => {
        store.dispatch(setName('John Doe'));
        const state = store.getState().awareness;
        expect(state.name).toBe('John Doe');
      });

      it('should set the online status', () => {
        store.dispatch(setOnline(true));
        const state = store.getState().awareness;
        expect(state.isOnline).toBe(true);
      });
    });

Test for AwarenessViewModel:

    describe('AwarenessViewModel', () => {
      let store: MockStore;
      let awarenessViewModel: AwarenessViewModel;

      beforeEach(() => {
        store = configureMockStore()(initialState);
        awarenessViewModel = new AwarenessViewModel(store);
      });

      it('should set the cursor location', () => {
        awarenessViewModel.setCursorLocation({ x: 100, y: 200 });
        const state = store.getState().awareness;
        expect(state.cursorLocation).toEqual({ x: 100, y: 200 });
      });

      it('should set the name', () => {
        awarenessViewModel.setName('John Doe');
        const state = store.getState().awareness;
        expect(state.name).toBe('John Doe');
      });

      it('should set the online status', () => {
        awarenessViewModel.setOnline(true);
        const state = store.getState().awareness;
        expect(state.isOnline).toBe(true);
      });
    });

Now, let's implement the Awareness slice and AwarenessViewModel:

Awareness slice:

    interface AwarenessState {
      cursorLocation: { x: number; y: number };
      name: string;
      isOnline: boolean;
    }

    const initialState: AwarenessState = {
      cursorLocation: { x: 0, y: 0 },
      name: '',
      isOnline: false,
    };

    const awarenessSlice = createSlice({
      name: 'awareness',
      initialState,
      reducers: {
        setCursorLocation: (state, action: PayloadAction<{ x: number; y: number }>) => {
          state.cursorLocation = action.payload;
        },
        setName: (state, action: PayloadAction<string>) => {
          state.name = action.payload;
        },
        setOnline: (state, action: PayloadAction<boolean>) => {
          state.isOnline = action.payload;
        },
      },
    });

    export const { setCursorLocation, setName, setOnline } = awarenessSlice.actions;

    export default awarenessSlice.reducer;

AwarenessViewModel:

    class AwarenessViewModel {
      private store: Store;

      constructor(store: Store) {
        this.store = store;
      }

      setCursorLocation(cursorLocation: { x: number; y: number }) {
        this.store.dispatch(setCursorLocation(cursorLocation));
      }

      setName(name: string) {
        this.store.dispatch(setName(name));
      }

      setOnline(isOnline: boolean) {
        this.store.dispatch(setOnline(isOnline));
      }
    }

Note: In this implementation, I assumed that the AwarenessViewModel is used to set the values in the store. If it is also used to retrieve the values from the store, we can add corresponding methods to the AwarenessViewModel class

(I’ve removed the styling from ChatGPT’s response.)

Overall this code is neither bad nor perfect. It won't run as it stands. It's missing a configureMockStore and assumes it uses redux-mock-store, which isn't part of the tech stack. Xu Hao can fix these problems by asking the LLM to progressively tweak the code through a sequence of rewrites. Here's one to get rid of redux-mock-store.

rewrite the tests without redux-mock-store

Sure, here's an example implementation of the tests for the AwarenessViewModel and the awareness slice without using redux-mock-store:

    import { createAwarenessSlice, AwarenessState, updateAwareness } from './awarenessSlice';
    import AwarenessViewModel from './AwarenessViewModel';

    describe('AwarenessViewModel', () => {
      const mockDispatch = jest.fn();
      const initialState: AwarenessState = {
        users: {
          'user1': {
            name: 'User 1',
            cursorPosition: { x: 0, y: 0 },
            online: true,
          },
          'user2': {
            name: 'User 2',
            cursorPosition: { x: 100, y: 100 },
            online: true,
          },
        },
      };
      let viewModel: AwarenessViewModel;

      beforeEach(() => {
        viewModel = new AwarenessViewModel(initialState, mockDispatch);
      });

      describe('getUserNames', () => {
        it('returns the list of user names', () => {
          const names = viewModel.getUserNames();
          expect(names).toEqual(['User 1', 'User 2']);
        });
      });

      describe('getCursorPosition', () => {
        it('returns the cursor position for a given user ID', () => {
          const cursorPosition = viewModel.getCursorPosition('user1');
          expect(cursorPosition).toEqual({ x: 0, y: 0 });
        });

        it('returns undefined for an unknown user ID', () => {
          const cursorPosition = viewModel.getCursorPosition('unknown');
          expect(cursorPosition).toBeUndefined();
        });
      });

      describe('updateCursorPosition', () => {
        it('dispatches the updateAwareness action with the new cursor position', () => {
          viewModel.updateCursorPosition('user1', { x: 50, y: 50 });
          expect(mockDispatch).toHaveBeenCalledWith(updateAwareness({
            user: 'user1',
            cursorPosition: { x: 50, y: 50 },
          }));
        });
      });
    });

And here's an example implementation of the awarenessSlice without using redux-mock-store:

    import { createSlice, PayloadAction } from '@reduxjs/toolkit';

    export type CursorPosition = { x: number, y: number };

    export type UserAwareness = {
      name: string;
      cursorPosition: CursorPosition;
      online: boolean;
    };

    export type AwarenessState = {
      users: Record<string, UserAwareness>;
    };

    const initialState: AwarenessState = {
      users: {},
    };

    const awarenessSlice = createSlice({
      name: 'awareness',
      initialState,
      reducers: {
        updateAwareness: (state, action: PayloadAction<{ user: string, cursorPosition: CursorPosition }>) => {
          const { user, cursorPosition } = action.payload;
          state.users[user].cursorPosition = cursorPosition;
        },
        setOnline: (state, action: PayloadAction<{ user: string, online: boolean }>) => {
          const { user, online } = action.payload;
          state.users[user].online = online;
        },
      },
    });

    export const { updateAwareness, setOnline } = awarenessSlice.actions;

    export const awarenessReducer = awarenessSlice.reducer;

Note that since we’re not using redux-mock-store, we need to pass a mock dispatch function to the view model constructor, and in the test for updateCursorPosition we need to check that the correct action was dispatched using jest.fn().
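ChatGPT's response shows the tests but not the rewritten view model they exercise. The following is one guess at a class that would satisfy them, written as a self-contained sketch: the `updateAwareness` action creator is hand-written here as a stand-in for the one exported by the slice, and a plain recording function plays the role of `jest.fn()` (with Vitest, the stack named in the prompt, that would be `vi.fn()`).

```typescript
type CursorPosition = { x: number; y: number };
type UserAwareness = { name: string; cursorPosition: CursorPosition; online: boolean };
type AwarenessState = { users: Record<string, UserAwareness> };

type UpdateAwarenessAction = {
  type: "awareness/updateAwareness";
  payload: { user: string; cursorPosition: CursorPosition };
};

// Stand-in for the slice's action creator, hand-written to avoid any dependency.
function updateAwareness(payload: UpdateAwarenessAction["payload"]): UpdateAwarenessAction {
  return { type: "awareness/updateAwareness", payload };
}

// Assumed shape: the tests construct the view model from a state and a dispatch function.
class AwarenessViewModel {
  private state: AwarenessState;
  private dispatch: (action: UpdateAwarenessAction) => void;

  constructor(state: AwarenessState, dispatch: (action: UpdateAwarenessAction) => void) {
    this.state = state;
    this.dispatch = dispatch;
  }

  getUserNames(): string[] {
    return Object.keys(this.state.users).map((id) => this.state.users[id].name);
  }

  getCursorPosition(user: string): CursorPosition | undefined {
    const entry = this.state.users[user];
    return entry ? entry.cursorPosition : undefined;
  }

  updateCursorPosition(user: string, cursorPosition: CursorPosition): void {
    this.dispatch(updateAwareness({ user, cursorPosition }));
  }
}

// A recording function in place of a mocking library.
const dispatched: UpdateAwarenessAction[] = [];
const viewModel = new AwarenessViewModel(
  { users: { user1: { name: "User 1", cursorPosition: { x: 0, y: 0 }, online: true } } },
  (action) => { dispatched.push(action); },
);
viewModel.updateCursorPosition("user1", { x: 50, y: 50 });
```

Because the view model only receives a dispatch function, the test never needs a store at all, which is what removes the dependency on redux-mock-store.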

Further rewrites can fit in more adjustments, such as asking it to `Use configureTestingStore('awareness', initialState) to initialize the test store`, which gets it to fit in with the team’s test idioms. Then, with some path adjustments and a small amount of glue for the reducers, the code can run just fine.
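The `configureTestingStore` helper belongs to the team and is not shown in the session, so the sketch below is only a guess at the kind of glue involved. It assumes reducers are registered by slice name (`registerReducer` is a hypothetical function) and hand-rolls a tiny store instead of using Redux, purely so the example is self-contained.

```typescript
type Action = { type: string; payload?: unknown };
type AnyReducer = (state: any, action: Action) => any;

// Hypothetical glue: slice reducers registered by name.
const reducers: Record<string, AnyReducer> = {};

function registerReducer(slice: string, reducer: AnyReducer): void {
  reducers[slice] = reducer;
}

// Hypothetical helper; it mirrors only the call shape quoted in the prompt.
function configureTestingStore<S>(slice: string, initialState: S) {
  const reducer = reducers[slice];
  if (!reducer) {
    throw new Error(`No reducer registered for slice "${slice}"`);
  }
  let state: Record<string, unknown> = { [slice]: initialState };
  return {
    getState: () => state,
    dispatch: (action: Action): void => {
      // Run the real reducer so tests observe real state transitions.
      state = { ...state, [slice]: reducer(state[slice], action) };
    },
  };
}

// Usage with a deliberately tiny reducer, also made up for this example.
registerReducer("awareness", (state: { online: boolean }, action) =>
  action.type === "awareness/setOnline" ? { online: action.payload === true } : state,
);
const store = configureTestingStore("awareness", { online: false });
store.dispatch({ type: "awareness/setOnline", payload: true });
```

Unlike a mock store, a helper of this kind lets a test assert on the resulting state rather than on which actions were dispatched.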

Once this is working, Xu Hao can repeat the process for the rest of the tasks in the master plan.

My takeaway from this discussion was that chain of thought and generated knowledge prompting can be significantly useful tools for programming. In particular, it shows that to use LLMs well, we need to learn how to construct prompts to get the best results. This experience suggests that it's useful to interact with the LLM like a junior partner, starting them with architectural guidelines, asking them to show their reasoning, and tweaking their outputs as we go.